This is the final post in my five-part Skills Before Tools series based on my K-12 AI Implementation Guide. Each post in this series unpacks one of the five core skills students need to use AI strategically and responsibly. In this post, we will explore ethical awareness and accountability.

The first four skills in this series (purpose setting and questioning, clarity in communication, evaluation and judgment, and revision and improvement) all support students in using AI thoughtfully. Like those skills, ethical awareness and accountability develop over time through explicit instruction, modeling, practice, and reflection. Teaching students to use AI ethically and responsibly is the best way to proactively address concerns about academic integrity.

Academic Dishonesty in the Age of AI

When ChatGPT exploded onto the scene in 2022, there was concern that AI would “kill the traditional essay,” lead to widespread academic dishonesty, and undermine learning. Teachers worried that students would outsource their thinking and rely on AI to complete work meant to develop their skills and demonstrate their learning.

People assumed that generative AI would dramatically increase dishonest behavior, but the research has revealed a more complex picture. Several large-scale studies suggest that rates of cheating have not surged as feared. A Stanford-affiliated survey of students across 40 high schools in the U.S. found that 60-70% of students self-reported cheating in the months after ChatGPT launched. Those numbers are statistically similar to rates of cheating before the release of ChatGPT. Turnitin reported similar findings when assessing the presence of AI in students’ writing assignments. Cheating has remained relatively stable; however, new AI-related forms have emerged.

What appears to be changing is how students are cutting corners. Instead of copying from a friend or pasting from a website, students ask AI to complete parts of an assignment. Some use AI to brainstorm or edit rough drafts, disengaging from the harder cognitive work of generating, organizing, and refining ideas. These shortcuts differ from traditional approaches to cheating, but the underlying issue remains the same. Students are bypassing productive struggle and, as a result, may fail to develop critical conceptual understanding and skills.

The ease with which AI enables students to sidestep the learning process makes teaching ethical awareness and accountability critical if students are to harness the power of these tools to improve their learning.

Why Ethical Awareness and Accountability Are Foundational Skills for AI Use

Ethical awareness and accountability are what ensure that students remain responsible decision-makers when using AI. In my AI implementation guide, this skill pairing focuses on helping students understand that they are accountable for their choices, the work they produce, and the impact that their decisions have on others.

Is This Use of AI Honest, Fair, and Aligned With Expectations?

Ethical awareness is the ability to recognize when and how to use AI in ways that are honest, fair, and aligned with shared academic values. It requires students to pause and consider the potential consequences of their decisions, including misrepresentation, bias, misinformation, and privacy risks.

Accountability builds on that awareness. It requires students to take ownership of their decisions. They need to be able to articulate how they used AI, verify the accuracy of the information they include, and accept responsibility for the final products.

As educators, we can help students develop this habit of reflection by encouraging them to pause and consider questions like:

- Is the way I am using AI honest and aligned with our class expectations?

- Am I doing the thinking and demonstrating the learning that this task was designed for?

- What responsibility do I have for the accuracy and quality of this work?

- How should I acknowledge the role that AI played in this process?

These questions shift the conversation from enforcement to responsibility. Instead of treating AI use as something our students have to hide, we want them to learn that transparency and ownership are non-negotiable parts of the learning process.

Research on AI literacy makes it clear that explicit instruction on issues such as identifying bias, analyzing outputs for misinformation, cross-checking information for accuracy, and considering privacy is critical. When this work is woven into the fabric of a class, students are more likely to develop the critical habits they need to use AI responsibly.

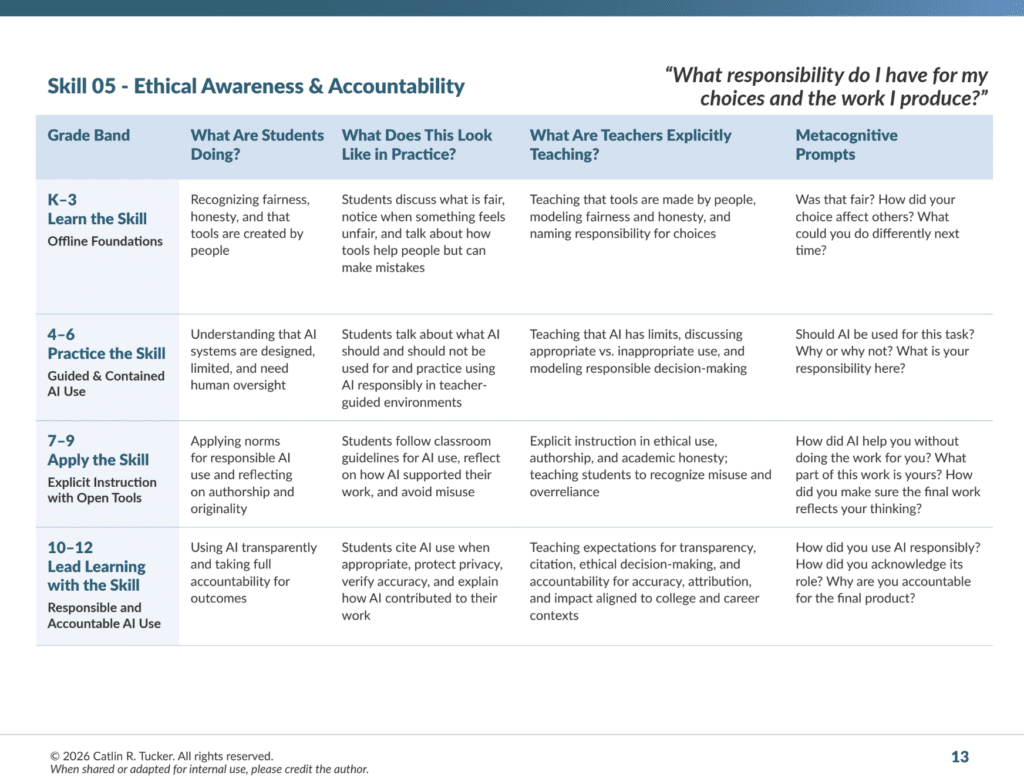

Ethical Awareness and Accountability Across Grade Levels in K–12 Classrooms

Across grade levels, ethical awareness and accountability center on these key questions: Is this use of AI honest? Does it support my learning? What responsibility do I have for the work I submit? As students progress through K-12, their understanding of these questions becomes more nuanced. Younger students begin by learning that tools can be helpful, but they are still responsible for their ideas and actions. Older students develop the capacity to evaluate the ethical implications of AI use, recognize issues such as bias, and take ownership of how they incorporate AI into their workflows.

Grades K–3: Building Early Ideas About Responsibility and Honesty

In the early grades, students are not using AI tools. Instead, teachers focus on building foundational habits that will later support responsible technology use, including honesty, ownership of ideas, and the ability to explain one’s thinking.

At this stage, ethical awareness is grounded in everyday classroom experiences. Students are learning how their actions affect others in the learning community, practicing respect for classmates’ ideas, collaboration, and honesty in their interactions.

They also learn that their ideas matter, that mistakes are part of learning, and that they are responsible for the work they produce. Teachers reinforce that tools and support can help learning, but students must still do the thinking and be honest about how they arrived at an answer.

Teachers may prompt reflection with questions such as:

- How did you figure that out?

- What part of this work shows your thinking?

- Did someone help you with this idea? How?

- Why is it important to be honest about how we solved a problem?

These early conversations establish a sense of responsibility and ownership that prepares students to use AI thoughtfully when they encounter it in later grades.

Grades 4–6: Introducing Ethical AI Use in Guided Environments

In grades 4–6, students begin interacting with AI in structured, teacher-moderated environments. At this stage, ethical awareness focuses on helping students understand that AI systems are designed by people, have limitations, and should be used thoughtfully.

Teachers guide students in discussing when AI may support learning and when it may not be appropriate. This is where routines like co-creating agreements and having learners examine and discuss scenarios for AI use can be powerful. Students begin thinking about issues such as bias, accuracy, and responsible use. They also learn that even when AI contributes ideas or explanations, they remain responsible for the work they submit.

Teachers may prompt reflection with questions such as:

- Should AI be used for this task? Why or why not?

- Does this response make sense based on what you already know?

- How did AI help you think about this problem?

- What responsibility do you have for the final work?

These guided experiences help students develop the habit of questioning and reflecting on their use of AI.

Grades 7–9: Navigating Responsible AI Use

In middle and early high school, students begin using a wider range of AI tools for more complex academic tasks. At this stage, ethical awareness shifts from simple rules toward thoughtful decision-making.

Students examine how AI can influence their thinking and learn to recognize situations where relying too heavily on AI may misrepresent their learning. They also begin identifying bias, misinformation, and missing perspectives in AI-generated responses.

Teachers support this work by encouraging students to reflect on questions such as:

- How did AI influence your thinking during this task?

- What decisions did you make about what to keep, revise, or discard?

- Did AI support your thinking or replace it?

- What responsibility do you have for the accuracy of this work?

These conversations help students recognize that responsible AI use requires ongoing judgment and reflection.

Grades 10–12: Practicing Transparent and Accountable AI Use

By high school, ethical awareness and accountability are applied in academic and real-world contexts. Students are expected to make informed decisions about when AI is appropriate, how it should be used, and how its role should be acknowledged.

At this stage, students must take responsibility for explaining their process. They verify information, ensure the final work reflects their own understanding, and acknowledge when AI contributed to the outcome.

Teachers prompt reflection with questions such as:

- Why did you decide to use AI for this task?

- How did you verify the accuracy of the information it generated?

- What parts of this work represent your thinking?

- How did you acknowledge AI’s role in the process?

These expectations prepare students to use AI responsibly in college and career settings.

Teaching Ethical AI Use in Practice

Handing students a set of rules or policies is not enough. Like any other skill we teach in a classroom, responsible AI use requires modeling, guided practice, and reflection. Students need opportunities to think through ethical questions, analyze AI-generated content, make choices about how to use these tools, and reflect on those choices. The strategies below provide concrete ways for teachers to embed this important work in their classrooms.

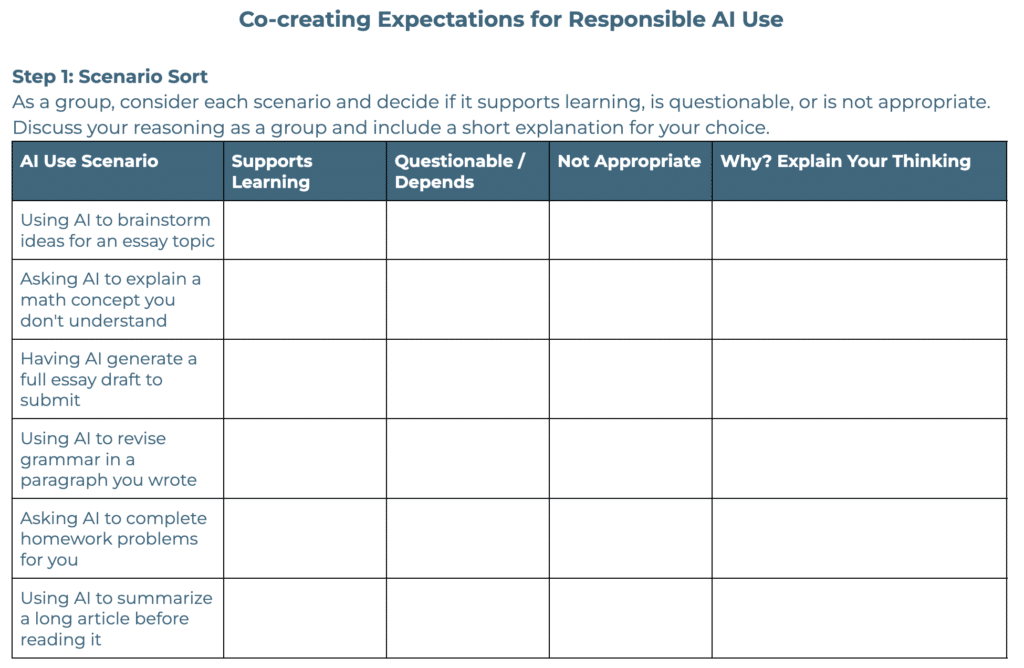

Co-Creating Expectations for AI Use in Classrooms

If a school or district does not have a clear AI policy, one practical place to begin is by co-creating expectations for responsible AI use. This process can make ethical awareness and accountability more visible. Instead of handing students a list of rules to follow, teachers can invite students into a conversation about when AI use supports learning, when it crosses an ethical line, and what transparency should look like in practice. When students have a voice in shaping expectations, they are more likely to understand why those expectations matter and to follow them.

Below is an overview of the process teachers can use to guide students in co-creating expectations.

Step 1: Sort the Scenarios

Put students in small groups to facilitate discussion and present them with a handful of realistic scenarios. Ask each group to read and discuss each scenario and decide whether the use of AI feels appropriate, questionable, or inappropriate. For example, students might consider whether it is acceptable to use AI to brainstorm ideas, summarize a complex article, generate a full draft, revise a paragraph, or complete homework problems. These examples give students something concrete to react to and help surface gray areas.

Step 2: Identify Patterns

After sorting the scenarios, ask students to reflect on what they noticed.

Discussion prompts:

- What made certain uses feel acceptable or helpful?

- What made some uses questionable?

- When did AI start replacing thinking instead of supporting it?

- What role does honesty or transparency play?

As patterns emerge, write them on the board. Students may identify ideas like:

- AI can help generate ideas or clarify concepts.

- AI should not do the thinking or complete the assignment.

- Students should be honest about when AI helped them.

- The final work should still represent the student’s understanding.

These patterns become the foundation for the class norms.

Step 3: Draft Classroom Expectations

In their small groups, ask students to turn those patterns into 3–5 clear expectations for AI use in the class. Example prompts for groups:

- AI can be used for…

- AI should not be used for…

- If AI helps you, you must…

- Students are responsible for…

Groups share their ideas and post them for a gallery walk (physical or digital). As students circulate, they mark the expectations they find strongest, creating a class heat map. The expectations with the most marks form the final set.

Step 4: Create a Class Set of Agreements for AI Use

Turn the final norms into a simple class agreement, such as:

- AI can support brainstorming, clarification, and feedback.

- AI should not replace your thinking or generate work you submit as your own.

- If AI helped you, you must acknowledge how it was used.

- You are responsible for the accuracy and quality of your work. If there is a concern about the authenticity of the work, you will need to explain the work and the role AI played in the process.

Post the agreement and include it in the assignment instructions so students see it when they make decisions about AI use.

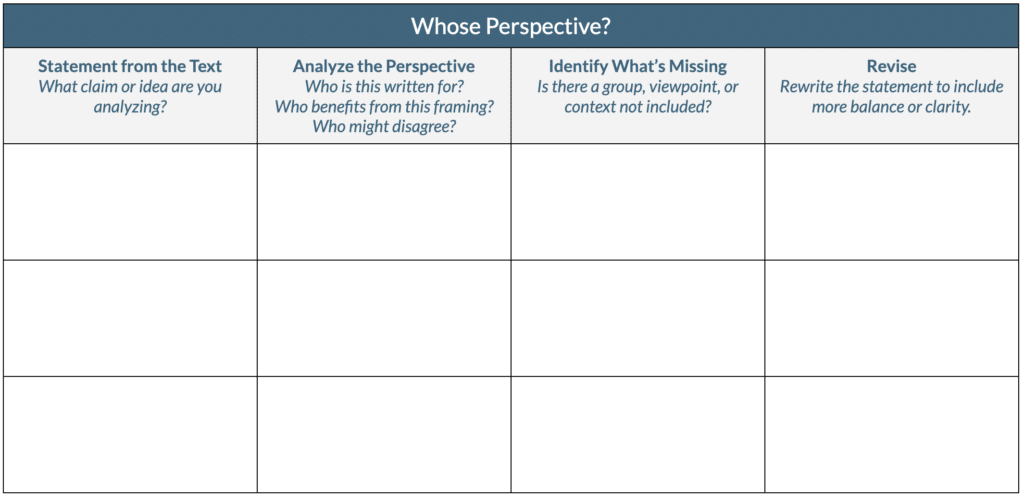

Teachers looking for a template to structure this activity can use the one pictured below.

Identifying Possible Bias

Identifying bias is another aspect of using AI that students may not be familiar with. It is important for students to understand that AI is not neutral. The outputs these systems generate can reflect bias based on how a prompt is phrased or because of the data used to train the AI system itself. AI models are trained on massive amounts of human-created texts, images, and information. As a result, they can reproduce the same biases, assumptions, and gaps that exist in the data they were trained on.

This means AI-generated responses may:

- emphasize certain perspectives while ignoring others

- reflect cultural or historical assumptions

- oversimplify complex issues

- present information confidently even when important context is missing

Because of this, students must learn to approach AI outputs with healthy skepticism. Ethical AI use requires students to question not only whether information is accurate, but also whose perspectives are represented and whose voices might be missing.

One way to build this habit is to have students analyze AI outputs for bias through an activity designed to help them think critically about AI-generated statements, such as Whose Perspective, pictured below.

AI Reflection Wrapper

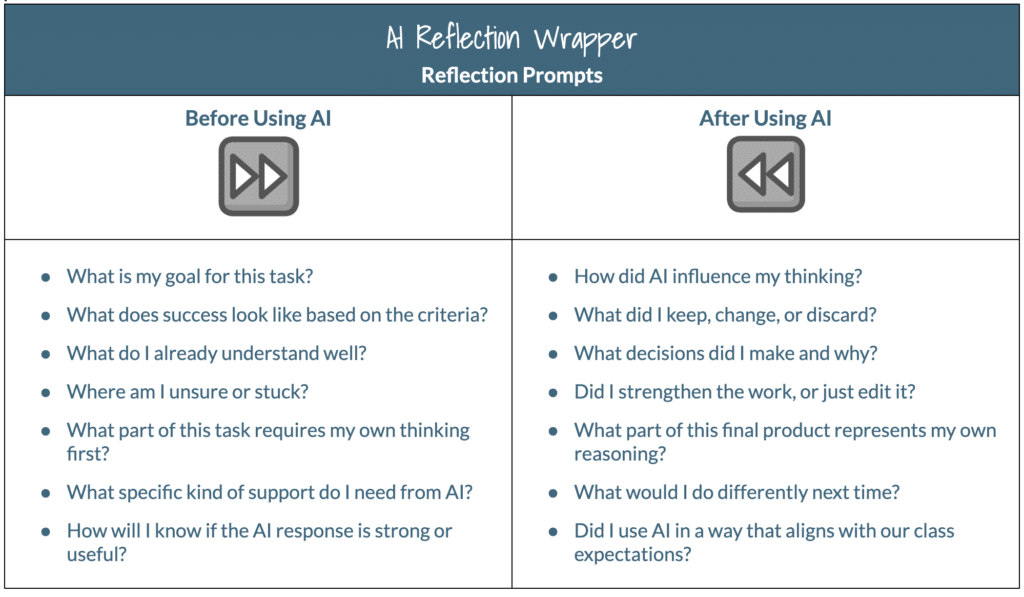

Structured reflection while using AI tools is another way to cultivate ethical awareness and accountability. When students use AI without pausing to consider its impact, they can quickly slip into passive use. Reflection helps them remain active decision-makers by encouraging them to consider how AI shaped their ideas, what they chose to accept or reject, and what thinking is theirs.

These reflective pauses help students recognize that they are ultimately responsible for the final product they submit. Instead of simply accepting AI-generated responses, students learn to evaluate them, make intentional choices, and remain accountable for their work.

One practical strategy is to use an AI Reflection Wrapper, as shown in the example below. This simple routine prompts students to pause both before and after using AI. Before using the tool, students clarify their goal, consider what they already understand, and identify where they need support. After using AI, they reflect on how the tool influenced their thinking, which ideas they accepted or rejected, and which parts of the final product reflect their own reasoning.

These Skills Matter Now More Than Ever

In an AI-rich learning environment, ethical awareness and accountability are essential. As AI tools become more capable and accessible, students must learn how to use them responsibly. They need to decide when AI supports learning and when it may interfere with it.

When teachers explicitly teach ethical awareness and accountability, students develop the habits needed to navigate AI thoughtfully. This work begins with conversations about honesty, fairness, and ownership in the early grades and evolves into expectations for transparent and responsible AI use in high school.

Students learn to question how AI influences their thinking, verify the accuracy of information, identify potential bias, acknowledge when AI contributed to their work, and remain accountable for the final product they submit.

This shift moves the conversation away from monitoring student behavior and toward cultivating responsible decision-makers. Instead of viewing AI use as something they must hide or avoid, students learn that transparency, integrity, and ownership are non-negotiable parts of the learning process.

When students understand that they are responsible for their decisions, their thinking, and the impact of their work, they are better prepared to navigate an increasingly complex digital and information landscape with integrity and agency.

Skills Before Tools: A K-12 Guide to AI Implementation

If you are looking for support as you navigate these conversations about AI implementation in your school or district, you can download the Skills Before Tools: A K-12 AI Implementation Guide. The guide is designed to help teams ground AI decisions in shared language, developmental progressions, and transferable skills. I am also available to support this work through professional learning, coaching, or discussions on implementation.
